Intelligence everywhere. For everyone.

As part of my work at Human Agency, I led product design for Liquid AI from June to October 2025, helping shape their first developer-facing product: LEAP (Liquid Edge AI Platform).

- Industry

Artificial intelligence

- Team

1 designer (me), 1 UX researcher, 1 developer

- Website

Today, most AI products rely heavily on the cloud. While this makes AI broadly accessible, it also comes with important trade-offs: latency, privacy concerns, limited customization, and a strong dependency on network connectivity.

Liquid AI is a foundation model company based in Boston. It builds smaller, highly efficient AI models designed to run directly on edge devices: smartphones, laptops, vehicles, and embedded systems. In 2025, Liquid AI had 3.1M+ model downloads on Hugging Face and had raised $250M+ in Series A funding.

Designing for edge AI comes with unique constraints:

- Most interactions happen locally, often through a computer terminal, leaving little room for traditional interface design.

- There is little to no telemetry, which makes documentation, guidance, and clarity the real product.

Liquid’s long-term vision is to make AI accessible to anyone, regardless of their technical background.

Before committing to a developer platform, Liquid commissioned third-party research with several hundred developers. The findings validated the need for a centralized hub and directly informed LEAP's roadmap.

Key takeaways:

- Strong demand for edge deployment and offline-first AI

- Developers across all experience levels want to build with AI but need better tooling and clearer pathways

Building a system for scale.

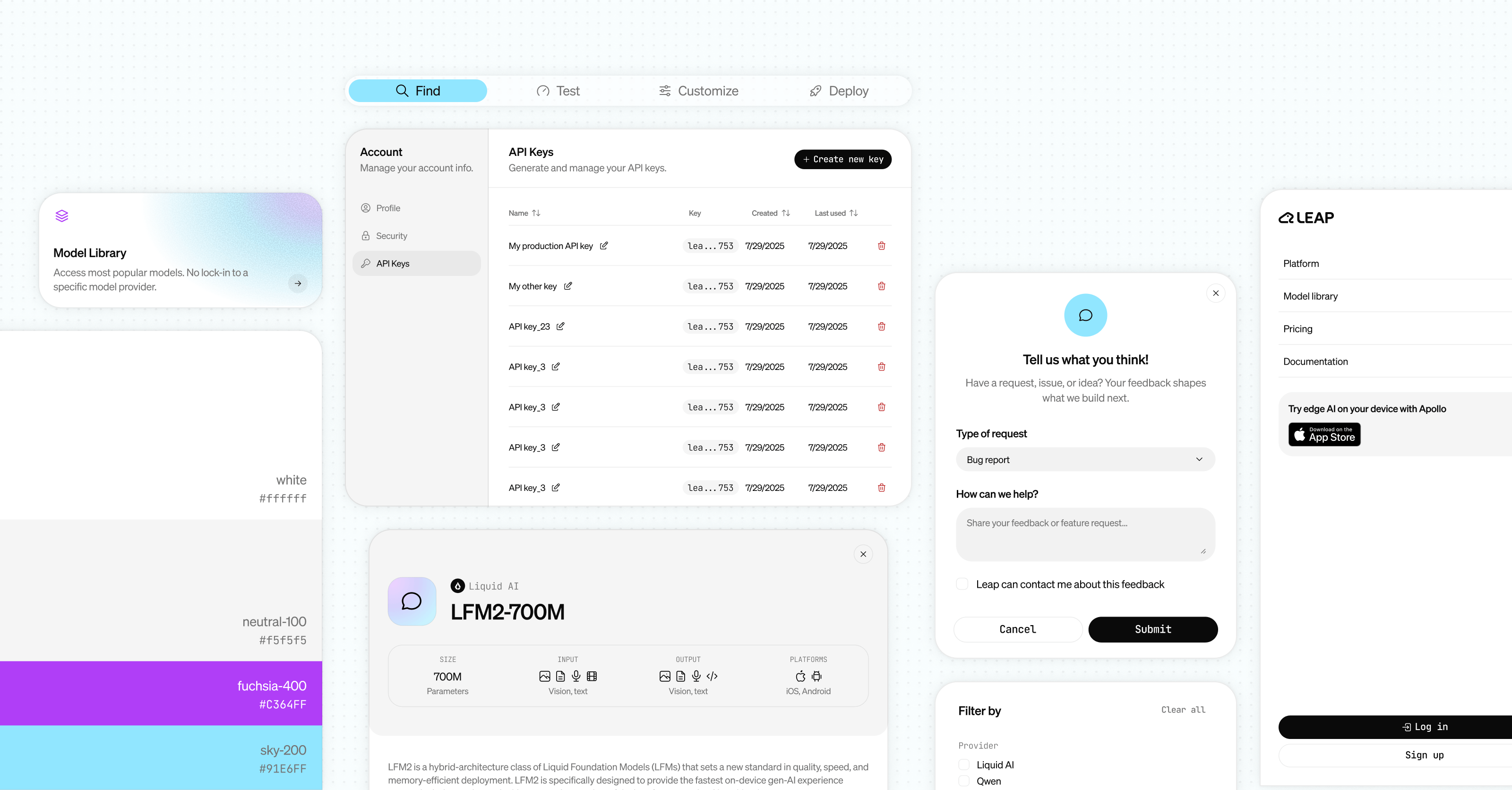

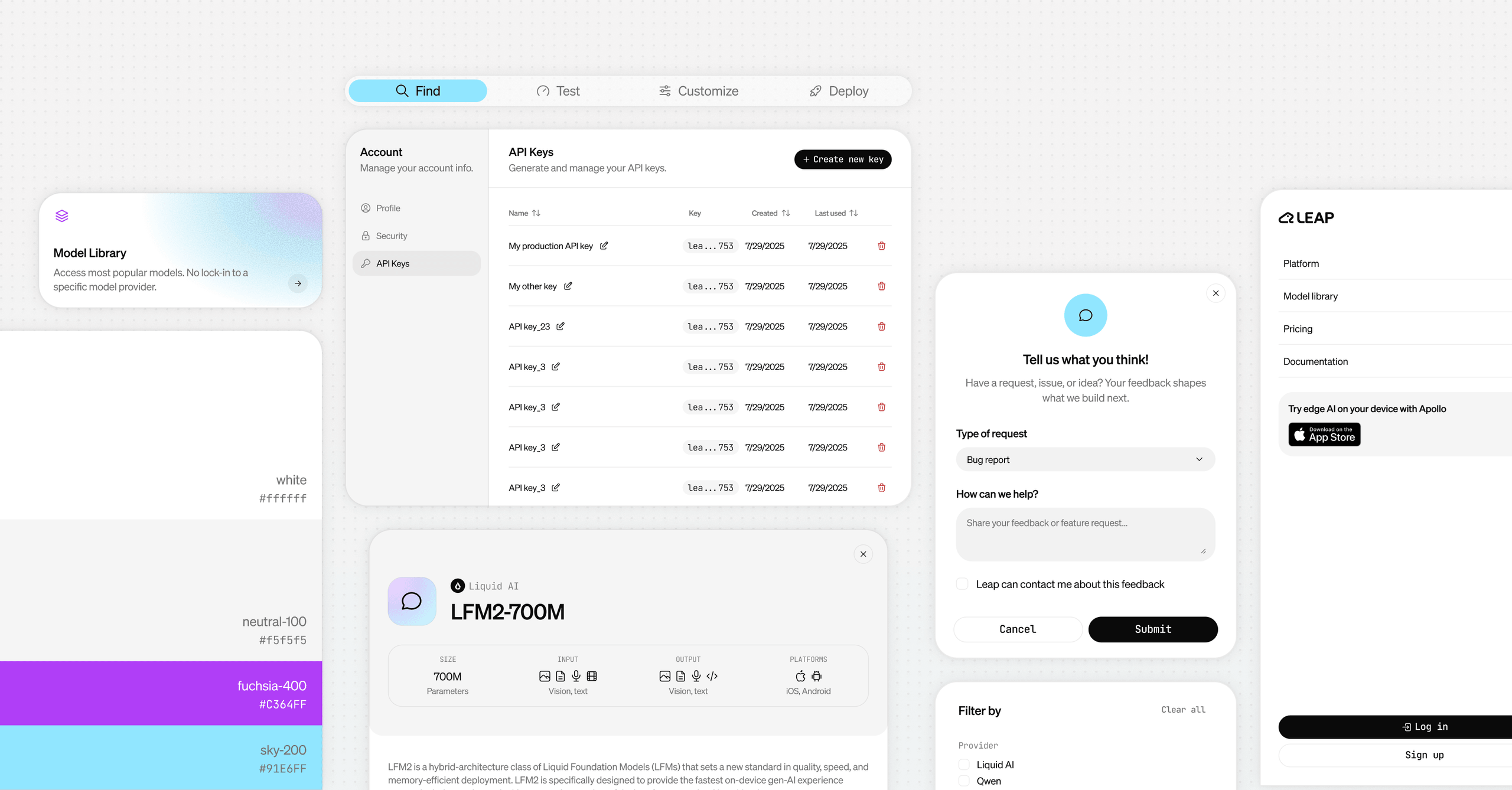

Working closely with the lead front-end engineer, I designed a full design system aligned with the existing tech stack (Tailwind nomenclature and shadcn/ui components), with accessibility built in from the start.

This system reduced design–development friction, enabled faster iteration, and made the upcoming rebrand significantly easier to execute. It became the backbone of the product as it evolved.

Designing for speed, clarity, and discovery

LEAP's core value proposition is helping developers quickly find the right model for their use case (chat, text-to-speech, embeddings, etc.). The Model Library was our first major feature.

First iteration: function over form

In just two weeks, we shipped a complete model library with search, filters, downloads, and documentation. Given the pace, we relied on familiar UX patterns and prioritized clarity and speed over novelty.

![[Fig.3] V0 of the model library, pre-design system.](/static/92b957d93b2481afef89a8f2c352f97d/d5e63/model_library_v0.jpg)

Improving and refining

With more time, we revisited the library to improve scannability and reduce visual noise. I adjusted the card layout, simplified the filters, and reinforced consistency across buttons, inputs, and selectors.

![[Fig.4] Updated model library, with new design system.](/static/1f73a4bdc79fb20af5b69418ec8ae267/d5e63/model_library_v1.jpg)

Guiding users through model customization without requiring ML expertise.

I worked closely with LEAP’s UX researcher and front-end developers to design a feature focused on model discovery and customization: LEAP Workbench. This project combined exploratory research, hands-on testing with internal developers, and rapid iteration through interactive wireframes.

Design and development happened in parallel, creating a tight feedback loop. Within a few weeks, we shipped a first working version.

1. Upload your data

The core feature of Workbench is the ability to customize a model with your own data. Users upload a .csv file to the platform, then specify the output they want the model to produce.

![[Fig.5] Users can upload their own data to the platform and specify their "ideal" output.](/static/4c44215ff1e6a69ccb3a9bedab86856c/d5e63/enterprise_customization_1.jpg)

2. Find your model

Once the data is uploaded, the platform tests multiple models against the dataset and compares the results. This lets us recommend the model best suited to the user's specific needs.

![[Fig.6] Users get matched to the best model for their needs.](/static/25f4b3176cb0d1d43c60f122902bb794/d5e63/enterprise_customization_2.jpg)

Introducing LEAP to the world.

In parallel to product design, Human Agency’s brand team was developing LEAP’s visual identity. This culminated in a full platform and website refresh in early October, aligned with ongoing feature development.

From exploration to production in months, not years.

Over a few intense months, we took LEAP from early-stage exploration to a production-ready platform used by developers worldwide.

We shipped:

- A design system now used consistently across all product surfaces

- A developer-facing platform that made Liquid's models accessible to non-ML engineers

- A model customization workflow that turned a complex, technical process into an intuitive, guided experience

What I learned:

- How to design at the intersection of cutting-edge technology and user accessibility

- How to move fast without sacrificing quality

- How to think systemically when the product itself is still being defined

Being part of a team working to democratize advanced AI was both a privilege and a challenge. This project pushed me to think bigger, move faster, and design for an industry evolving in real time.

![[Fig.1] LEAP identified audiences.](/static/c24ada3742f1db7ddec8c7723e0034b3/c17da/target_audience.jpg)

![[Fig.2] LEAP audience description and traits.](/static/336f7057825cc00a75e82e698bd4f3e2/c17da/target_audience_2.jpg)

![[Fig.7] LEAP new landing page.](/static/1e7d9759684f3b5d6525fe892f3ed372/d5e63/landing_mockup.jpg)

![[Fig.8] Revamped model cards.](/static/8c17d3f2d000ddeb57fd3ee9600b045c/c17da/model-cards.jpg)

![[Fig.9] LEAP logo.](/static/a412d00318b75a380397a16220eb2b03/c17da/logo.jpg)